The Complete Guide to Data Warehouse Migration: Part 1, Strategy and Planning

Data teams are being asked to carry more complexity than ever. Analytics is no longer limited to dashboards and monthly reporting cycles, and AI is raising the bar even further. Businesses expect modern data platforms to support more than historical reporting. They need to enable use cases like demand forecasting, real-time operational alerts, personalized customer recommendations, and AI-driven decision support across the business. Those insights also need to show up where work actually happens: in warehouses, BI tools, internal apps, customer-facing products, and automated workflows.

But many organizations are still trying to meet these expectations on top of legacy systems that were never built for this level of speed, scale, or flexibility. As data volumes grow, the cracks start to show. Queries slow down, pipelines miss SLAs, batch windows spill into business hours, and every new request adds more strain to already fragile architecture. Requirements like real-time ingestion, self-service analytics, and AI-ready data pipelines do not just expose technical debt—they make it impossible to ignore.

That is why data warehouse migration is rarely just a lift-and-shift exercise. The moment teams begin moving data, they uncover everything the old environment was hiding: brittle pipelines, inconsistent metric definitions, undocumented dependencies, duplicated logic, and low-confidence data. Migration forces those issues into the open. Done well, that is not a setback—it is the opportunity. It gives organizations a reason to clean up what was tolerated for too long and rebuild on stronger foundations.

More importantly, migration is a catalyst for modernization. It enables organizations to rethink architecture, streamline and consolidate data, and accelerate analytics delivery. For data leaders, the question is no longer just technical feasibility—migration has become a foundational step in digital transformation. By bridging legacy systems with modern, cloud-based environments, it lays the groundwork for advanced analytics, AI-driven use cases, and more efficient, data-informed operations.

What is Data Warehouse Migration?

Data warehouse migration is the process of moving data, workloads, and analytical pipelines from legacy or on-premises systems to modern environments such as cloud, hybrid, or lakehouse architectures. But in practice, it is much more than moving data from one platform to another.

A successful migration reshapes how data is stored, modeled, governed, and delivered to the business. It affects architecture decisions, pipeline design, performance, cost, security, and the overall reliability of analytics. Unlike a simple infrastructure upgrade, migration is an opportunity to modernize the data ecosystem so it can scale with growing demand and support new business and AI use cases.

This guide provides a practical framework for planning and executing a modern data warehouse migration, covering architecture choices, pipeline strategies, tooling considerations, and common pitfalls so teams can migrate with confidence and deliver value from day one.

Why Organizations Migrate Data Warehouses

The business case for data warehouse modernization in 2026 isn't about chasing trends. It's about reclaiming the ability to compete.

1. Technical Limitations of Legacy Systems

Enterprises initiate data warehouse migrations when legacy systems can no longer meet the speed, scale, and flexibility demanded by modern analytics. Many traditional warehouses are approaching end-of-life — without security patches, performance updates, or vendor support — while simultaneously failing to integrate with contemporary cloud ecosystems, streaming platforms, and APIs. Decades of siloed development compound the problem: systems built on incompatible data formats, proprietary structures, and closed communication protocols produce fragmented infrastructure that resists consolidation and makes consistent, governed analytics across the organization structurally difficult to achieve.

The performance consequences are just as significant. Query performance degrades as data volumes outgrow original capacity estimates. Batch-processing architectures built for small analyst teams cannot support the concurrency, speed, or workload intensity that modern BI, real-time analytics, and AI initiatives require. McKinsey research shows organizations spend up to 70% of their IT budgets simply keeping these systems operational — a resource trap that prevents strategic investment while delivering no competitive return. The engineers who should be building data products are maintaining infrastructure instead.

2. Business Imperatives

I. Rising Costs and Unsustainable Legacy Complexity

Legacy systems become increasingly expensive and difficult to maintain as they age. Hardware refresh cycles, licensing, and specialized talent drive up costs while delivering diminishing returns. At the same time, growing data volumes are pushing batch-based architectures beyond their limits, making scaling both inefficient and costly.

Cloud transforms IT spending from large upfront capital investments (CapEx) to usage-based operating expenses (OpEx), aligning costs with actual demand and reducing the risk of underutilized infrastructure.

II. AI and Real-Time Use Cases Expose Data Limitations

AI is rapidly becoming a business requirement rather than an experimental initiative. As organizations move AI and machine learning into production, they need data platforms that support low-latency access, real-time pipelines, scalable compute, and API-driven architectures. In many cases, the bottleneck is not the model, but whether the underlying data foundation can deliver trusted, timely, and accessible data at scale.

Legacy systems built on batch processing, siloed data, and rigid scaling struggle to support modern AI use cases such as vector search, real-time decisioning, and frequent model updates. In contrast, cloud-native platforms provide elastic compute, integrated AI capabilities, and flexible architectures that better align with these demands. AI is also accelerating migration itself, with predictive algorithms improving data mapping accuracy and significantly reducing migration timelines.

III. Competitive Pressure to Modernize and Scale

The gap between organizations that have modernized their data infrastructure and those that have not is widening. MIT CISR research across 1,311 global firms found that only 22% are "future ready," having built the digital capabilities to innovate, engage customers, and reduce costs simultaneously. The remaining 78% are falling behind at an accelerating rate as the organizations ahead of them compound their advantages quarter by quarter.

Microsoft Azure research shows organizations migrating to cloud-native PaaS architectures achieve a 228% ROI over three years, a 50% increase in application development speed, and a 40% reduction in infrastructure costs. Those that delay modernization face compounding disadvantages across industries: from real-time fraud detection in finance to personalization in retail and analytics in healthcare.

IV. Business Continuity, Resilience, and Security

Cloud migration is a critical enabler of business continuity and disaster recovery, allowing organizations to maintain operations even in the face of disruptions such as hardware failures, natural disasters, or cyberattacks. By moving data and applications to the cloud, companies benefit from built-in capabilities like automated backups, real-time data replication, and rapid failover mechanisms, which significantly reduce downtime and prevent data loss. Additionally, leading cloud providers invest heavily in advanced security infrastructure, offering features such as encryption, identity and access management, and continuous monitoring to strengthen protection against cyber threats.

V. Foundation for Enterprise Digital Transformation

Cloud serves as the backbone for integrating data, applications, and advanced technologies, enabling real-time decision-making, new business models, and enhanced customer experiences. By reducing the need for manual infrastructure management, cloud adoption allows teams to focus on higher-value activities such as analytics, innovation, and customer experience improvements.

3. Modern Data Platform Capabilities

Modern cloud-native architectures address the structural limitations of legacy systems across four dimensions:

- Cost: Moving from on-premises infrastructure to cloud-based platforms eliminates hardware refresh cycles, reduces licensing overhead, and shifts capital expenditure to a consumption-based model. Organizations typically see 15–40% infrastructure cost savings in the first three years post-migration.

- Scalability: Cloud data warehouses decouple storage from compute, allowing organizations to scale each independently as workloads fluctuate. Enterprises can support massive data volumes and high user concurrency without provisioning for peak capacity year-round — delivering consistent performance at a fraction of the fixed cost.

- Performance: Legacy systems rely on scheduled, batch-based data processing. Modern warehouses support real-time and near-real-time ingestion, enabling faster insights, shorter reporting cycles, and the ability to respond to changing business conditions as they happen rather than hours later.

- Agility: Modern architectures enable self-service BI, faster analytics development cycles, and native support for AI and ML workloads. Teams can move from data to decision without waiting on engineering queues or infrastructure provisioning cycles. AI-powered data migration tooling further accelerates this — predictive algorithms improve data mapping precision by 40%, reducing human error and expediting the migration process itself.

4. When NOT to Migrate

Cloud migration is not always the right move, and poor timing can be as risky as not migrating at all. Before committing, organizations must ensure the foundational conditions for success are in place. Here are some reasons not to migrate:

- Poor Data Quality and Lack of Clarity: Migration transfers data—it does not fix it. If your environment contains inconsistent metrics, undocumented logic, or unreliable pipelines, these issues will scale and become more costly in the cloud.

- Weak or Undefined Governance: Migration amplifies organizational ambiguity. If ownership, definitions, and lineage are unclear, those gaps will expand at scale in a cloud environment. Governance must be established before migration begins, not treated as a post-migration cleanup task.

- Poor Timing with Critical Business Cycles: Migrating during an active product launch, financial close, or regulatory audit compounds operational risk unnecessarily. Migrations should be planned around natural business windows: fiscal quarter-ends, scheduled maintenance periods and aligned with broader strategic initiatives. The disruption is manageable when timed correctly. When timed poorly, it becomes a crisis.

- Insufficient Technical Capability: Organizations routinely underestimate the technical capability required, configuring cloud platforms, managing hybrid environments, applying security controls, and maintaining data consistency throughout transition. Migrating without the right internal capability or a qualified external partner significantly increases the probability of data loss, pipeline failure, and cost overrun.

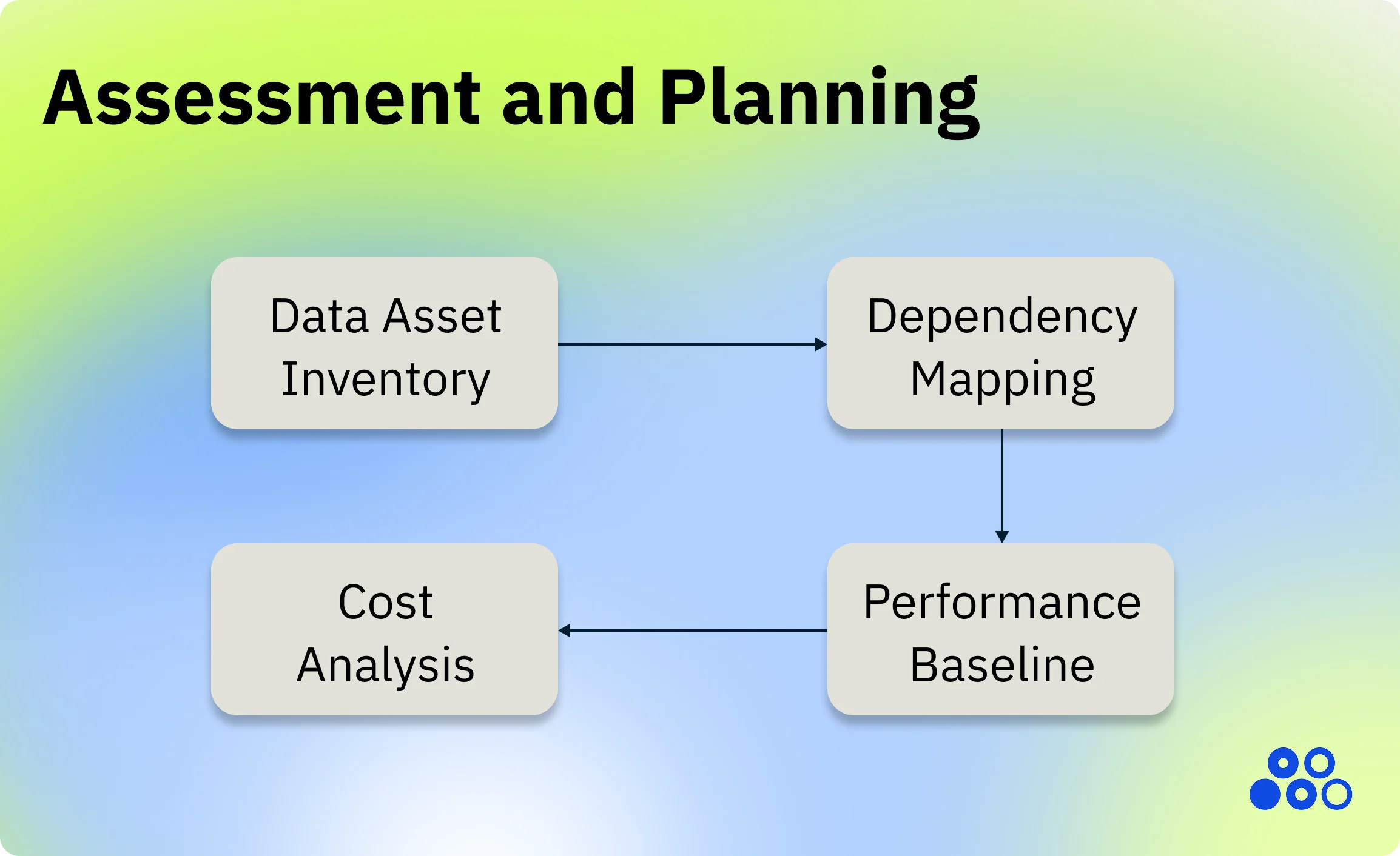

Pre-Migration Assessment & Planning

The first step for an organization before considering moving its data to the cloud, is to understand their current data processes and environment, and its dependencies. They must define their objectives, assess the data complexity and build a structured plan to see what should move and what should be retired.

Data Asset Inventory

The starting point of any migration assessment is a complete, accurate picture of your current data landscape. This involves cataloguing all the disparate data sources in your environment including APIs, flat files, databases and legacy systems into its critical need to prioritize migration sequencing.

Beyond raw source cataloguing, teams should document data volumes, growth patterns, and storage distribution across databases, files, and applications — and map schema complexity including custom data types, stored procedures, and inter-table dependencies that affect migration sequencing.

Dependency Mapping

Data assets do not exist in isolation. Every warehouse has downstream consumers — BI tools, applications, scheduled ETL jobs, API connections, and reporting workflows — that will break if dependencies are not identified and accounted for before migration begins.

Dependency mapping involves identifying which systems rely on others, tracking user access patterns to reveal who uses what data and when, and surfacing legacy constraints. It must trace interdependencies across data pipelines, ETL pipelines, APIs, and downstream reporting to avoid breaking business processes and must evaluate data locked in obsolete platforms or proprietary formats that require special extraction methods.

Thorough mapping documentation is the contract between business stakeholders and the technical implementation team. For example, during an ERP implementation managed by Oracle consulting teams, this document ensures that financial data from a legacy system is correctly transformed to meet the new system’s complex chart of accounts. Similarly, in a healthcare data warehouse migration following HL7 standards, precise mapping guarantees that patient data is consistently and accurately structured for regulatory compliance and clinical analysis. Without this guide, teams are left to make assumptions, which inevitably leads to errors that are costly and time-consuming to fix post-migration.

Performance Baseline Establishment

Before a single table is moved, teams must establish a clear performance baseline against which the migrated environment will be measured. Since the inventory becomes a source of truth, every subsequent decision on what to migrate or retire becomes as reliable as the completeness of the catalog, and a thorough audit can help in identifying hidden quality issues like duplicate, missing or inconsistent formatting, and establishes a clear baseline that defines a precise migration scope.

Performance baseline establishment involves capturing current query performance, concurrent user loads, and SLA metrics providing the reference point against which the new environment will be benchmarked post-migration.

Continuous performance optimization and monitoring throughout the migration lifecycle are essential to prevent system degradation, meet user expectations, and realize the full business value of the new platform.

Cost Analysis and Business Case

Migration investment must be justified with a structured financial model — not a general assertion that cloud is cheaper. To secure funding, the business case must move beyond "IT cost savings" and tie the investment to clear financial outcomes, quantifying impact across cost reduction (TCO), performance improvements, risk reduction, and strategic enablement.

The cost analysis should account for both sides of the ledger. On the cost side: current infrastructure spend, licensing, maintenance contracts, and engineering hours consumed by legacy upkeep. On the return side: projected cloud savings, productivity gains from faster analytics, reduced downtime risk, and the strategic value of capabilities the legacy system cannot support at all — real-time ingestion, self-service BI, AI workloads.

A pre-migration impact assessment should provide an accurate analysis of cost and resource requirements and if there are tight deadlines, complex migration scope, and limited resources, performing this assessment as early as possible is essential. Most projects that fail to do so are based on previous project estimates at best, or optimistic guesswork at worst.

Choosing Your Target Platform

Evaluation Framework

There are several major cloud platforms that you can choose from, and each major cloud platform approaches warehousing differently. Each migration approach: cloud-native, lakehouse, or legacy modernization, comes with trade-offs in scalability, control, and complexity.

The only reliable way to compare platforms is to model your specific workload — define your monthly query volume, total data stored, and query scanning patterns, then use vendor calculators and free trials to test against your actual data before committing.

Evaluation Criteria at a Glance

Platform Comparison Overview

The market currently divides into three broad categories: the established cloud-native warehouses (Snowflake, BigQuery, Redshift) optimized for managed analytics at scale; the lakehouse platforms (Databricks, Microsoft Fabric) built for unified data engineering, science, and AI; and legacy modernization platforms for organizations that need enterprise-grade performance without a full architectural overhaul. The right choice depends on workload profile, cloud strategy, and team capability — not vendor marketing.

Migration Strategy & Planning

There is no single “correct” way to migrate a data warehouse. The right approach depends on business priorities, risk tolerance, technical debt, and timelines. In practice, most organizations combine multiple methodologies—but understanding each approach helps define a clear strategy.

Migration Methodologies

1. Lift-and-Shift (Rehosting)

Lift-and-shift moves an existing data warehouse to the cloud with minimal changes to schema, pipelines, or transformation logic. The goal is speed—getting off legacy infrastructure quickly without disrupting existing workloads.

In practice, this often includes small adjustments to make workloads compatible with the target platform. For example, Snowflake promotes a “Lift, Adjust, and Shift” approach, where organizations first migrate historical data as a point-in-time snapshot (a “golden copy”), then transition live ingestion pipelines using ETL or ELT. Deeper redesign is deferred unless the existing system has major architectural issues.

Other platforms follow similar patterns:

- AWS Redshift Migration Service (DMS) supports bulk data transfer and replication with minimal transformation.

- Google BigQuery Data Transfer Service enables straightforward ingestion from legacy systems.

Best for: organizations that need to exit legacy systems quickly (e.g., costly on-prem Teradata or Oracle warehouses).

Trade-off: fast migration, but existing inefficiencies and technical debt are carried forward.

2. Big Bang Migration

A big bang migration moves all major data, pipelines, and workloads in a single cutover event. Once the new system is live, the legacy environment is decommissioned almost immediately. This approach requires extensive upfront testing, validation, and coordination across teams, because there is little room for error during the cutover window.

Example:

A mid-sized SaaS company migrating from an on-prem PostgreSQL warehouse to BigQuery may choose a weekend cutover, migrating all datasets and switching dashboards at once to avoid running dual systems.

Best for: For startups or small-size organizations with a modest amount of data and the possibility of taking a complete break-in operation.

Tradeoff: faster end state, but much higher operational risk.

3. The Trickle Method (Parallel)

In the trickle method or a parallel run, you run both legacy and cloud systems simultaneously during a transition period, and this is advantageous with the lack of downtime. Workloads are gradually migrated and validated in the new environment while the old system remains active as a fallback.

This approach reduces risk significantly because teams can compare outputs between systems and validate business logic before fully switching over. It also allows teams to build expertise in the new platform incrementally.

Example:

A fintech company migrating to Snowflake may run both its legacy warehouse and Snowflake in parallel for several months, validating financial reporting outputs before fully cutting over.

Best for: If you are a medium or large enterprise with a 24/7 lifecycle that can’t afford a total shutdown or downtime is not acceptable, the trickle approach would be the best choice.

Tradeoff: higher temporary cost and complexity due to running dual environments, and requires significant synchronization efforts and close supervision of what has already been transferred and what is still waiting for its turn.

4. Phased Migration (Incremental)

A phased migration breaks the migration into smaller units, such as by business domain, dataset, or pipeline layer (raw → staging → marts). Each phase is completed and validated before moving to the next.

This approach reduces risk and makes the migration more manageable, especially for large organizations with many dependencies.

Matia supports phased migration by letting teams move ingestion workflows incrementally while validating reliability and data quality inside the same platform. Its real-time CDC and fast replication keep source and target systems in sync throughout the transition.

Example:

A healthcare organization might migrate patient data pipelines first, followed by billing systems, and then analytics dashboards—ensuring each domain is stable before proceeding.

Best for: Works well for moderate-size databases that have close to no interdependencies. Gives users an opportunity to take novelties in their stride by small volumes, which spells less adaptation stress.

Tradeoff: slower migration timeline and requires strong coordination to know the dependencies to prevent shutting down the whole system while migration is underway.

5. Refactoring or Re-architecting

Refactoring involves redesigning the data warehouse to align with modern architecture and business needs. This includes rebuilding schemas, rewriting ETL/ELT pipelines, redefining data models, and improving governance, observability, and performance.

This approach is often paired with modern tools:

- dbt for modular, testable transformations

- Databricks or Snowflake for scalable compute and storage

- Delta Lake / Iceberg for lakehouse architectures

Refactoring is the most resource-intensive approach, but it delivers the greatest long-term value by eliminating legacy inefficiencies and enabling advanced analytics and AI use cases.

Example:

A logistics company moving to Databricks may redesign its entire pipeline from batch ETL to streaming ingestion, enabling real-time shipment tracking and predictive optimization.

Best for: organizations using migration as a full modernization initiative.

Tradeoff: highest effort, but strongest scalability, performance, and data reliability.

6. Hybrid Migration

A hybrid approach combines multiple methodologies based on workload criticality and business priorities. This is the most common strategy in real-world migrations.

For example:

- Lift and shift historical data for speed

- Run parallel systems for critical reporting

- Refactor high-value pipelines for performance and scalability

This approach allows teams to balance speed, risk, and modernization.

Best for: complex environments with mixed priorities.

Tradeoff: flexible and practical, but requires strong planning and governance.

Workload Prioritization

Not all data assets are equal, and treating them as such is one of the most common reasons migrations overrun.

Prioritization should be based on three criteria:

- business criticality

- migration complexity

- downstream dependencies

Assets that support critical operations or decision-making move first; those with the highest dependency counts — BI reports, transformation workflows — are sequenced to unblock the most downstream consumers as early as possible. Pilot migrations with non-critical workloads should always precede mission-critical ones — they validate the technical approach early and surface dependency gaps when they are cheapest to resolve. Non-critical and static datasets move first, followed by operational databases and workflows, with full legacy parity validation as the final phase before cutover.

Team Structure and Roles

A successful migration is as much an organizational effort as a technical one. Cloud architects, data engineers, DBAs, ETL specialists, QA analysts, security leads, and business stakeholders each own a distinct slice of the program: strong project governance and communication coordinate them and prevent rework. Identifying who needs to be involved and aligning them before migration begins is as important as the technical plan itself.

The first step is building a stakeholder register: a comprehensive list of all stakeholders with their roles, responsibilities, contact details, and communication preferences. This becomes the operational backbone of program governance — clarifying ownership, surfacing gaps in accountability, and ensuring every team member knows who to escalate to when issues arise.

Establishing consistent communication channels across IT, data engineering, business units, and executive decision-makers is non-negotiable. Regular sessions with both data stakeholders and technical teams should be scheduled from the outset — not reactively — to surface concerns early, align on expectations, and keep all parties moving at the same pace.

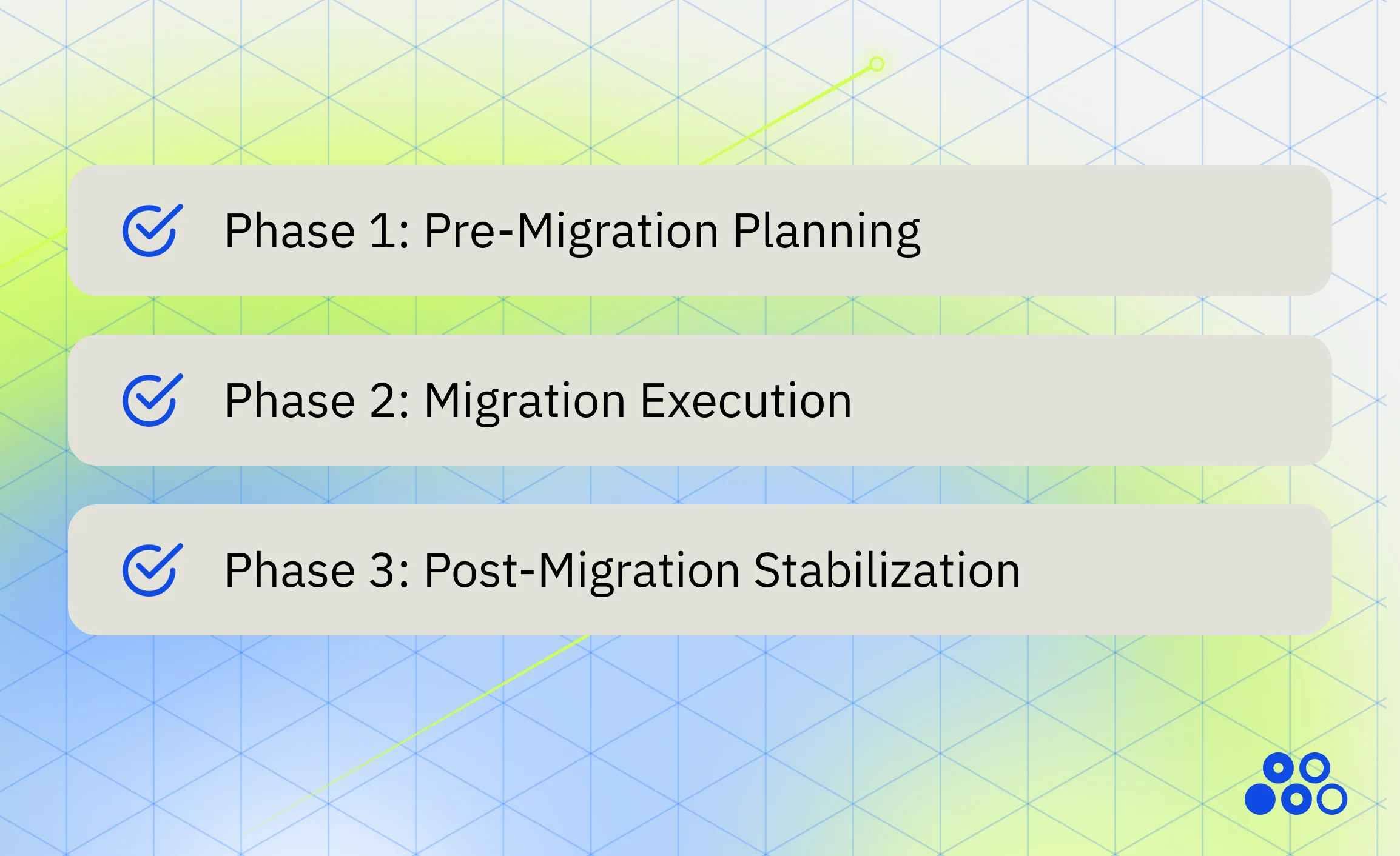

Timeline and Milestones

The timeline for data migration depends on the type, volume and complexity of the data being migrated, as well as issues you encounter around data cleanliness and compatibility. Because migration timelines directly impact business operations, it's crucial to set realistic expectations among stakeholders before embarking on a migration project.

Phase 1: Pre-Migration (3–6 Months)

Gartner reports 60% of data warehouse migrations exceed planned timelines due to weak data profiling and governance — the pre-migration phase is where that failure either begins or is prevented:

- Vendor evaluation, platform selection, and procurement

- Team training on the target platform and migration tooling

- Security and compliance approvals — GDPR, HIPAA, SOC 2

- Pilot project execution and validation on non-critical workloads

- Data inventory, dependency mapping, and schema assessment

Phase 2: Migration Execution (2–8 Months)

A phased execution approach moves data gradually by domain — finance first, followed by HR, marketing, and operations — reducing risk and allowing teams to resolve issues before more complex workloads are migrated:

- Initial full data loads and schema conversion

- ETL/ELT pipeline migration and application integration

- Performance optimization and query tuning on the target platform

- Parallel validation — comparing source and target outputs before cutover

- User acceptance testing and end-user training

Phase 3: Post-Migration Stabilization (1–3 Months)

Migration does not end at cutover. Continuous monitoring and optimization post-migration are essential for long-term success

Performance monitoring against pre-migration baselines:

- Issue resolution and pipeline refinement

- Cost governance and cloud spend optimization

- Documentation updates and knowledge transfer to operational teams

- Decommissioning of legacy systems once stability is confirmed

Success Measurement Milestones:

- Use pre-migration impact assessment for accurate cost and resource analysis.

- Define project phases, from data assessment to testing and validation.

- Allocate resources and assign responsibilities for each phase.

- Plan for contingencies and potential delays in case of unforeseen issues or complications.

- Define precise workflows for reaching milestones.

- Address resource gaps and ensure clarity in task sequence.

- Performance benchmarks comparing old versus new system response times

- Cost reduction targets, including infrastructure and operational savings

- User satisfaction scores from business stakeholders and analysts

- Data quality metrics demonstrating migration accuracy and completeness

Data warehouse migration is often framed as a technical upgrade, but in practice, it is a strategic reset. Planning determines whether migration becomes a controlled transformation or an expensive disruption. The organizations that succeed are not the ones that move fastest, they are the ones that understand what they are moving, why it matters, and how it connects to the business. Clear objectives, strong governance, and a realistic assessment of technical and organizational readiness are what separate successful migrations from failed ones.

This phase is where trade-offs are defined: speed versus risk, lift-and-shift versus modernization, short-term continuity versus long-term scalability. Getting these decisions right upfront reduces complexity later and prevents costly rework during execution.

Check out Part 2 of this series, where we move from strategy to execution—focusing on how to migrate, validate, and operationalize the new system.

.svg)

.png)